At a Glance

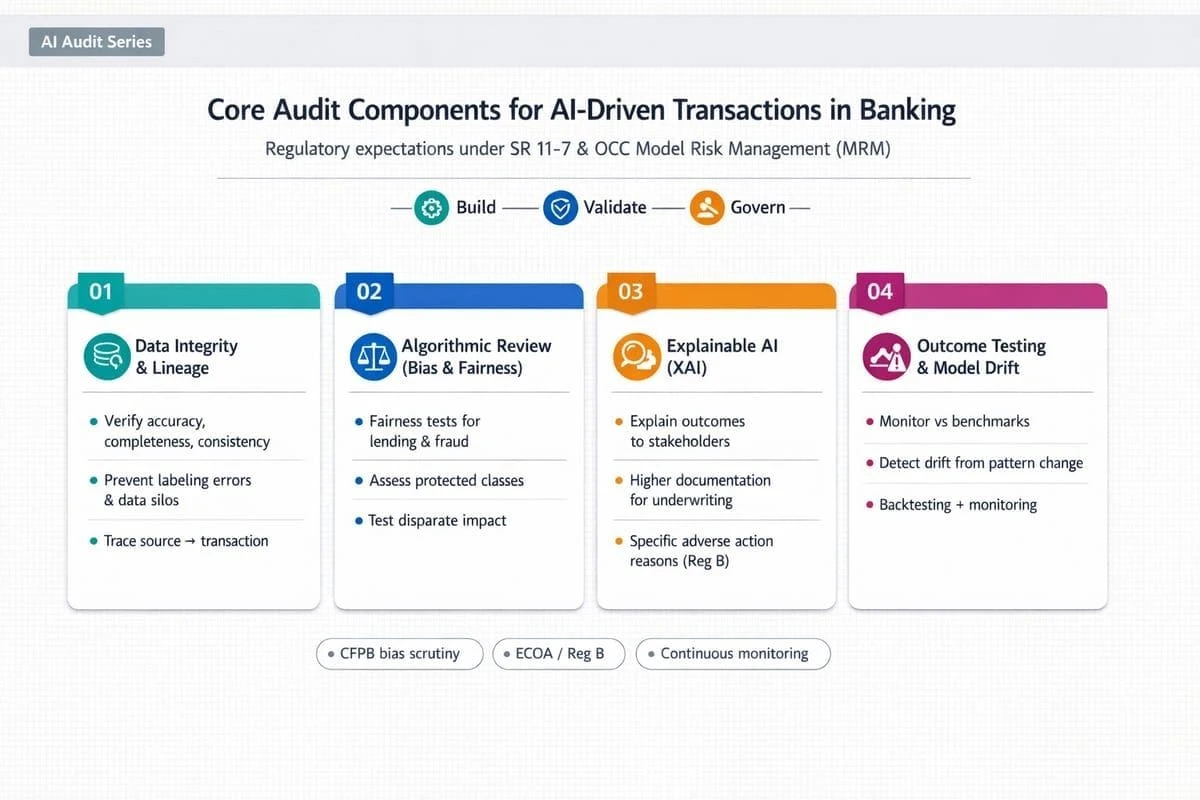

Auditing AI-driven transaction decisioning now depends on whether individual decisions can be reconstructed, using data lineage, fairness evidence, explainability artifacts, and continuous monitoring that can withstand supervisory and dispute scrutiny.

From exploratory pilots to governed intelligence

As AI shifts from experimentation to embedded transaction decisioning, audit leaders are being asked to treat models and their supporting data flows as critical infrastructure rather than analytical tooling. This changes the control question: aggregate model performance is no longer sufficient when the risk is a disputed outcome, a missed fraud screen, or a payments exception that creates liability and reputational exposure.

Supervisory expectations are converging on a practical standard that is already familiar in other high-risk bank domains: decisions must be traceable, explainable, and governable at production velocity. In transaction environments, that requires evidence that is created at the time of decision, retained in a durable form, and consumable across the lines of defense. Where AI introduces opacity or accelerates change, audit scope must expand to cover the controls that make those characteristics manageable.

Core audit components and 2026 requirements

Data integrity and lineage

Data remains the dominant determinant of auditability. For AI-driven transaction decisioning, auditors increasingly expect banks to demonstrate real-time data readiness rather than relying on periodic batch reconciliation and after-the-fact documentation. This includes the ability to reproduce the exact training and calibration inputs, feature definitions, and transformations that were used at a specific historical point in time.
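
Point-in-time reconstruction can be illustrated with a small sketch: an append-only history of feature definitions that answers "which definition was in force at time T?" with a binary search. The `FeatureRegistry` and `FeatureVersion` names, the feature name, and the schema are hypothetical; a production feature store would also need to version the underlying data snapshots, not only the definitions.

```python
from bisect import bisect_right
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class FeatureVersion:
    """One immutable version of a feature definition (hypothetical schema)."""
    effective_from: datetime
    version: str
    transformation: str  # reference to the logic that computes the feature


@dataclass
class FeatureRegistry:
    """Append-only registry supporting point-in-time ("as-of") lookups."""
    _history: dict[str, list[FeatureVersion]] = field(default_factory=dict)

    def register(self, name: str, fv: FeatureVersion) -> None:
        versions = self._history.setdefault(name, [])
        # Enforce chronological, append-only order so history cannot be rewritten.
        if versions and fv.effective_from <= versions[-1].effective_from:
            raise ValueError("new version must start after the current one")
        versions.append(fv)

    def as_of(self, name: str, ts: datetime) -> FeatureVersion:
        versions = self._history.get(name, [])
        starts = [v.effective_from for v in versions]
        # Index of the last version whose start time is at or before ts.
        idx = bisect_right(starts, ts) - 1
        if idx < 0:
            raise LookupError(f"{name} had no definition at {ts.isoformat()}")
        return versions[idx]


# Example: which definition applied to a transaction scored on 15 March 2025?
registry = FeatureRegistry()
registry.register("txn_velocity_24h", FeatureVersion(
    datetime(2025, 1, 1, tzinfo=timezone.utc), "v1", "count(txn) over 24h"))
registry.register("txn_velocity_24h", FeatureVersion(
    datetime(2025, 6, 1, tzinfo=timezone.utc), "v2", "count(txn) over 24h, excluding reversals"))
print(registry.as_of("txn_velocity_24h", datetime(2025, 3, 15, tzinfo=timezone.utc)).version)  # v1
```

Rejecting out-of-order registrations is the design choice that matters for audit: a definition can be superseded, but the record of what applied earlier cannot be silently edited.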

Framing integrity through ALCOA+ clarifies what evidence must look like in practice. Data changes should be attributable to accountable owners, legible and inspectable through standardized identifiers, contemporaneously captured as decisions are made, original in the sense that raw inputs are retained alongside derived features, accurate through validation controls, and complete through controls that prevent silent edits or missing fields from propagating into model behavior.
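
One way to make these attributes testable is to check each decision evidence record against them automatically. The sketch below is a minimal example under stated assumptions: the field names, the `DEC-` identifier prefix, the five-second capture tolerance, and the use of a SHA-256 hex digest for the raw input are all hypothetical choices, not a standard ALCOA+ schema.

```python
from datetime import datetime, timedelta, timezone

# Hypothetical required fields for a decision evidence record.
REQUIRED_FIELDS = ("record_id", "owner", "decided_at", "captured_at",
                   "raw_input_hash", "features", "validation_passed")
MAX_CAPTURE_LAG = timedelta(seconds=5)  # assumed tolerance for "contemporaneous"
HEX_DIGITS = set("0123456789abcdef")


def alcoa_plus_findings(record: dict) -> list[str]:
    """Return ALCOA+-style findings for one record; an empty list means no findings."""
    findings = []
    # Complete: no required field may be missing or null.
    missing = [f for f in REQUIRED_FIELDS if record.get(f) is None]
    if missing:
        findings.append(f"complete: missing {', '.join(missing)}")
        return findings  # the remaining checks depend on these fields
    # Attributable: an accountable owner is recorded.
    if not str(record["owner"]).strip():
        findings.append("attributable: empty owner")
    # Legible: standardized identifier format (assumed "DEC-" prefix).
    if not str(record["record_id"]).startswith("DEC-"):
        findings.append("legible: non-standard record_id")
    # Contemporaneous: evidence captured at, or shortly after, decision time.
    lag = record["captured_at"] - record["decided_at"]
    if lag < timedelta(0) or lag > MAX_CAPTURE_LAG:
        findings.append(f"contemporaneous: capture lag {lag}")
    # Original: raw input retained, referenced here by an assumed SHA-256 hex digest.
    digest = str(record["raw_input_hash"])
    if len(digest) != 64 or not set(digest) <= HEX_DIGITS:
        findings.append("original: raw input hash missing or malformed")
    # Accurate: upstream validation controls passed.
    if record["validation_passed"] is not True:
        findings.append("accurate: validation did not pass")
    return findings


# Example: a record captured 30 seconds after the decision fails contemporaneity.
t0 = datetime(2026, 1, 5, 12, 0, tzinfo=timezone.utc)
record = {"record_id": "DEC-000123", "owner": "fraud-ops", "decided_at": t0,
          "captured_at": t0 + timedelta(seconds=30), "raw_input_hash": "ab" * 32,
          "features": {"amount": 250.0}, "validation_passed": True}
print(alcoa_plus_findings(record))  # ['contemporaneous: capture lag 0:00:30']
```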

Audit testing should focus on whether lineage is captured by design across batch and streaming paths, and whether lineage extends through vendor platforms, managed feature stores, and shared data products. Outsourcing does not outsource accountability for evidence. When banks rely on versioning approaches to bind data, code, and feature logic, the audit objective is not the tooling itself but the reconstructability it provides under exam pressure.
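
As a sketch of how versioning can bind data, code, and feature logic, a bank could store a deterministic fingerprint with each decision and have audit recompute it from the artifacts retrieved later. The parameter names and identifiers below are hypothetical, and a matching hash only demonstrates reconstructability if the underlying artifacts are themselves retained.

```python
import hashlib
import json


def lineage_fingerprint(data_snapshot_id: str, code_commit: str,
                        feature_logic: dict, model_version: str) -> str:
    """Deterministic fingerprint of the inputs that produced a decision (illustrative)."""
    payload = {
        "data_snapshot_id": data_snapshot_id,
        "code_commit": code_commit,
        "feature_logic": feature_logic,
        "model_version": model_version,
    }
    # Canonical JSON (keys sorted at every level, no whitespace) so logically
    # identical inputs always produce the same hash regardless of dict ordering.
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def lineage_reconstructs(stored_fingerprint: str, **artifacts) -> bool:
    """Audit test: recompute from retrieved artifacts and compare with the stored value."""
    return lineage_fingerprint(**artifacts) == stored_fingerprint
```

The audit objective here is the comparison, not the hashing: if the recomputed value differs from the one captured at decision time, the decision cannot be reconstructed from the evidence on hand.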

Governance expectations are also tightening through ecosystem requirements. Where counterparties or market utilities require documented AI governance policies beginning in 2026, audit should confirm that lineage requirements translate into enforceable control obligations rather than remaining policy statements.

Algorithmic review for bias and fairness

Fairness is becoming a control requirement rather than an ethical debate, particularly where AI influences credit, fraud, identity, or collections decisions that can create disparate outcomes. Audit scope should begin with governance posture: whether the bank has defined fairness objectives consistent with applicable law and product strategy, and whether those objectives are translated into measurable tests, thresholds, and remediation triggers.

Testing protocols need to be repeatable and reviewable. Auditors should assess whether performance is evaluated across relevant segments on an appropriate cadence, whether drift monitoring includes disparate-impact signals, and whether mitigation techniques used during training and calibration align with approved policy. The goal is not to eliminate all differences across groups but to ensure that differences are understood, justified where permissible, and addressed when they indicate control breakdown or unintended proxying.
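
A common starting point for segment-level testing is the adverse impact ratio: each segment's favorable-outcome rate divided by the rate of the most favored segment. The sketch below computes it and lists segments that fall below a review threshold. The 0.8 cut-off borrows the "four-fifths" heuristic from US employment-selection guidance and is an assumption here; the bank's approved fairness policy, applicable law, and significance testing should set real thresholds. Segment labels are assumed to be available for testing.

```python
from collections import defaultdict

# Assumed review trigger ("four-fifths" heuristic); policy should set the real value.
AIR_REVIEW_THRESHOLD = 0.8


def adverse_impact_ratios(decisions):
    """decisions: iterable of (segment, favorable_outcome: bool) pairs.

    Returns {segment: segment favorable rate / highest segment favorable rate}.
    """
    totals, favorable = defaultdict(int), defaultdict(int)
    for segment, is_favorable in decisions:
        totals[segment] += 1
        favorable[segment] += int(is_favorable)
    rates = {s: favorable[s] / totals[s] for s in totals}
    if not rates:
        return {}
    best = max(rates.values())
    # If no segment has any favorable outcome, there is no relative disparity to report.
    return {s: (r / best if best > 0 else 1.0) for s, r in rates.items()}


def segments_for_review(decisions, threshold=AIR_REVIEW_THRESHOLD):
    """Segments below the threshold: a trigger for review, not a finding of bias."""
    return sorted(s for s, r in adverse_impact_ratios(decisions).items() if r < threshold)
```

Treating a low ratio as a review trigger rather than an automatic failure reflects the goal stated above: differences must be understood and justified, or remediated, not mechanically eliminated.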

State-level requirements, such as the Colorado Artificial Intelligence Act, add operational complexity by raising expectations for documented impact assessments. Audit teams should therefore test whether the bank can produce an annualized view of material harms and mitigation effectiveness without relying on manual, fragile processes.

Explainable AI as a release gate

Explainability is shifting from optional transparency to a production gate in critical workflows. The audit objective is decision defensibility: whether the bank can explain why a specific transaction was flagged, why a customer was stepped up for verification, or why a credit outcome changed, using rationale that is consistent with policy and stable enough to support dispute resolution.

Counterfactual explanations are often the most operationally useful because they translate model behavior into controllable levers under the same policy constraints. Audit should examine whether explanations are generated at decision time, retained with the decision record, and presented in a form that investigators and customer-facing teams can use without misinterpretation. If explanations are overly technical, unstable across re-runs, or inconsistent with policy, the control intent is not met even if explanations exist.
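
For a linear score, a single-feature counterfactual has a closed form: the value a feature would need to take for the score to fall to the decision threshold. The sketch below computes this only for features that policy marks as actionable, only within policy-permitted ranges, and sorts results deterministically so re-runs return the same explanation. The linear model, feature names, and bounds are illustrative assumptions; non-linear production models generally need a search-based counterfactual method instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Counterfactual:
    feature: str
    current: float
    required: float  # value at which the score reaches the threshold


def linear_counterfactuals(x, weights, bias, threshold, actionable_bounds):
    """Single-feature counterfactuals for an illustrative linear score.

    A transaction is flagged when bias + sum(w_i * x_i) > threshold.
    `actionable_bounds` maps each actionable feature to its policy-permitted
    (low, high) range; features not listed there are never suggested.
    """
    score = bias + sum(w * x[f] for f, w in weights.items())
    if score <= threshold:
        return []  # not flagged, so there is nothing to explain
    results = []
    for f, (low, high) in actionable_bounds.items():
        w = weights.get(f, 0.0)
        if w == 0.0:
            continue  # this feature cannot move the score
        # Solve bias + ... + w * v = threshold for v, holding other features fixed.
        required = x[f] + (threshold - score) / w
        if low <= required <= high:
            results.append(Counterfactual(f, x[f], required))
    # Smallest change first, ties broken by name, so the output is stable across re-runs.
    return sorted(results, key=lambda c: (abs(c.required - c.current), c.feature))
```

In use, the returned explanation would be stored with the decision record at decision time, so investigators and customer-facing teams see the same rationale that existed when the transaction was flagged.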

Human-in-the-loop governance should be tested as an operating model control, not a label. Escalation thresholds must be explicit, overrides must be controlled, and reviewer behavior must be auditable. Otherwise, human review can become an ungoverned exception path that introduces new bias and weakens accountability.
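
Controlled overrides and auditable reviewer behavior can be sketched as two checks: validating each override against explicit rules, and measuring override rates per reviewer so outliers can be investigated. The reason codes, the four-eyes amount threshold, and the record layout below are hypothetical.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical approved reason codes and four-eyes threshold.
APPROVED_REASON_CODES = frozenset({"DOC_VERIFIED", "CUSTOMER_CONFIRMED", "KNOWN_FALSE_POSITIVE"})
SECOND_APPROVAL_ABOVE = 10_000.00


@dataclass(frozen=True)
class OverrideRequest:
    decision_id: str
    reviewer: str
    reason_code: str
    amount: float
    approver: Optional[str] = None


def override_violations(req: OverrideRequest) -> list[str]:
    """Return control violations; an empty list means the override may proceed."""
    problems = []
    if req.reason_code not in APPROVED_REASON_CODES:
        problems.append("reason code not on approved list")
    if req.amount > SECOND_APPROVAL_ABOVE:
        if req.approver is None:
            problems.append("second approval required above threshold")
        elif req.approver == req.reviewer:
            problems.append("approver must differ from reviewer")
    return problems


def reviewer_override_rates(outcomes):
    """outcomes: iterable of (reviewer, overrode_model: bool) pairs."""
    counts, overrides = {}, {}
    for reviewer, overrode in outcomes:
        counts[reviewer] = counts.get(reviewer, 0) + 1
        overrides[reviewer] = overrides.get(reviewer, 0) + int(overrode)
    return {r: overrides[r] / counts[r] for r in counts}
```

Override rates do not prove bias on their own, but an unusually high or skewed rate is the kind of signal that keeps human review from becoming an ungoverned exception path.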

Outcome testing and continuous monitoring

Transaction decisioning environments force audit programs to move from episodic review to continuous verification. AI behavior can change quickly with data drift, adversarial adaptation, upstream product changes, and model refresh cycles. Outcome testing should therefore connect to control-relevant benchmarks such as fraud catch and release patterns, loss rates, complaint signals, operational error rates, and policy exception patterns that indicate a breakdown rather than expected volatility.
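
Drift monitoring is often implemented with the population stability index (PSI), which compares a baseline distribution (for example, model scores at validation) with the current one, bin by bin: PSI is the sum over bins of (a_i - e_i) * ln(a_i / e_i), where e_i and a_i are the baseline and current shares in bin i. The 0.1 and 0.25 bands below are a widely used rule of thumb, not a regulatory standard, and are assumptions here; the same banding pattern can be applied to outcome benchmarks such as catch rates or complaint volumes to separate breakdowns from expected volatility.

```python
import math


def population_stability_index(expected, actual, eps=1e-6):
    """PSI between two binned distributions given as counts per bin."""
    if len(expected) != len(actual):
        raise ValueError("bin counts must align")
    e_total, a_total = sum(expected), sum(actual)
    psi = 0.0
    for e, a in zip(expected, actual):
        # Floor shares at eps so an empty bin does not produce log(0).
        e_pct = max(e / e_total, eps)
        a_pct = max(a / a_total, eps)
        psi += (a_pct - e_pct) * math.log(a_pct / e_pct)
    return psi


def drift_status(psi, watch=0.1, act=0.25):
    """Map a PSI value to an assumed escalation band."""
    if psi >= act:
        return "investigate"
    if psi >= watch:
        return "watch"
    return "stable"
```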

Monitoring must be paired with auditable safeguards. Circuit breakers should be treated as formal controls with defined activation criteria, independent logging, and tested fallback paths to rule-based or more conservative decisioning when confidence degrades. A common failure mode is that fallback states are defined on paper but are not operationally ready under real transaction load or do not preserve decision evidence at the same standard.
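
A circuit breaker with a defined activation criterion, independent logging, and a rule-based fallback that emits the same evidence fields can be sketched as follows. The confidence floor, the three-in-a-row trip rule, the fallback amount rule, and the `decision_audit` logger (assumed to be routed to a store the model team cannot modify) are all hypothetical.

```python
import logging
from dataclasses import dataclass

# Assumed: this logger ships to an independent, tamper-evident store.
audit_log = logging.getLogger("decision_audit")


def conservative_rule(amount: float) -> bool:
    """Hypothetical rule-based fallback: flag anything above a fixed amount."""
    return amount > 1_000.0


@dataclass
class CircuitBreaker:
    min_confidence: float = 0.7  # assumed activation criterion
    trip_after: int = 3          # consecutive low-confidence scores before tripping
    tripped: bool = False
    low_streak: int = 0

    def decide(self, decision_id: str, amount: float,
               model_flag: bool, confidence: float) -> dict:
        if not self.tripped:
            self.low_streak = self.low_streak + 1 if confidence < self.min_confidence else 0
            if self.low_streak >= self.trip_after:
                self.tripped = True
                audit_log.warning("circuit breaker tripped at %s", decision_id)
        if self.tripped:
            path, flagged = "fallback_rule", conservative_rule(amount)
        else:
            path, flagged = "model", model_flag
        # Both paths emit the same evidence fields, so fallback decisions stay
        # reconstructable to the same standard as model decisions.
        record = {"decision_id": decision_id, "path": path,
                  "flagged": flagged, "model_confidence": confidence}
        audit_log.info("decision %s", record)
        return record

    def reset(self, approved_by: str) -> None:
        # Re-enabling the model is a deliberate, attributable action, not automatic.
        audit_log.warning("circuit breaker reset approved by %s", approved_by)
        self.tripped, self.low_streak = False, 0
```

Requiring a named approver to reset the breaker, rather than letting it recover on its own, is what makes the fallback state a governed control instead of a transient technical condition.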

Network rule changes also influence audit scope. Where Nacha's ACH fraud-monitoring obligations tighten from March 2026, banks need audit-ready evidence not only that monitoring occurred but that responses were timely, consistent, and aligned to a documented risk basis.
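
Evidence of timely, documented responses can be tested mechanically over the alert log. In the sketch below, the risk tiers and response windows are hypothetical; the applicable Nacha rule text and the bank's documented risk basis determine the real obligations.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Hypothetical response windows by alert risk tier, set by the documented risk basis.
RESPONSE_SLA = {"high": timedelta(hours=4), "medium": timedelta(hours=24), "low": timedelta(days=3)}


@dataclass(frozen=True)
class Alert:
    alert_id: str
    tier: str
    raised_at: datetime
    resolved_at: Optional[datetime]
    rationale: Optional[str]  # documented basis for the action taken


def timeliness_exceptions(alerts, as_of: datetime):
    """Return (alert_id, issue) pairs for late or undocumented responses."""
    exceptions = []
    for a in alerts:
        # Open alerts are measured up to the review date.
        end = a.resolved_at or as_of
        if end - a.raised_at > RESPONSE_SLA[a.tier]:
            exceptions.append((a.alert_id, "late"))
        if a.resolved_at is not None and not a.rationale:
            exceptions.append((a.alert_id, "no documented rationale"))
    return exceptions
```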

Key resources for implementation

Implementation succeeds when banks reconcile multiple frameworks into a single coherent operating model with clear ownership and consistent evidence standards. The most common failure pattern is adopting guidance in parallel without resolving overlaps in accountability, change control cadence, and evidence retention across fraud, payments, technology, and compliance teams.

Resources such as the Cyber Risk Institute's financial services profile (the CRI Profile) can help translate NIST-style practices into controls that reflect sector realities such as operational resilience and third-party dependence. The FFIEC IT Examination Handbook remains central for grounding AI-adjacent controls in established expectations for technology risk management, data governance, and audit evidence. Professional guidance from audit bodies also supports the design of testing protocols that are disciplined without collapsing into tool selection or vendor-driven assumptions.

Benchmarking audit readiness for AI transaction decisioning

Executives need decision confidence that AI-driven transaction decisioning is operating within risk appetite and that evidence will withstand supervisory challenge. That confidence depends on measurable maturity across the same components audit teams must test: whether lineage can be reconstructed at decision level, whether fairness testing is embedded into release and monitoring routines, whether explainability artifacts are usable across the lines of defense, and whether circuit breakers and fallback paths are tested and operationally credible.

Assessing these capabilities as an integrated system is often more reliable than reviewing them as isolated control statements, because constraints and trade-offs accumulate across domains. Legacy batch dependencies can weaken real-time evidence, vendor opacity can break lineage, and fragmented governance can turn monitoring into activity rather than assurance. Used to benchmark these dimensions, the DUNNIXER Digital Maturity Assessment supports executive sequencing decisions by clarifying where readiness is strong, where it is fragile, and where control investment most reduces decision risk, rather than adding documentation that slows delivery without improving defensibility.

Reviewed by

Ahmed is the Founder & CEO of DUNNIXER and a former IBM Executive Architect with 26+ years in IT strategy and solution architecture. He has led architecture teams across the Middle East & Africa and globally, and also served as a Strategy Director (contract) at EY-Parthenon. He is an inventor with multiple US patents and an IBM-published author. He works with CIOs, CDOs, CTOs, and Heads of Digital to replace conflicting transformation narratives with an evidence-based digital maturity baseline, peer benchmark, and prioritized 12–18 month roadmap, delivered consulting-led and platform-powered for repeatability and speed to decision, and including an executive/board-ready readout. He writes about digital maturity, benchmarking, application portfolio rationalization, and how leaders prioritize digital and AI investments.