At a Glance

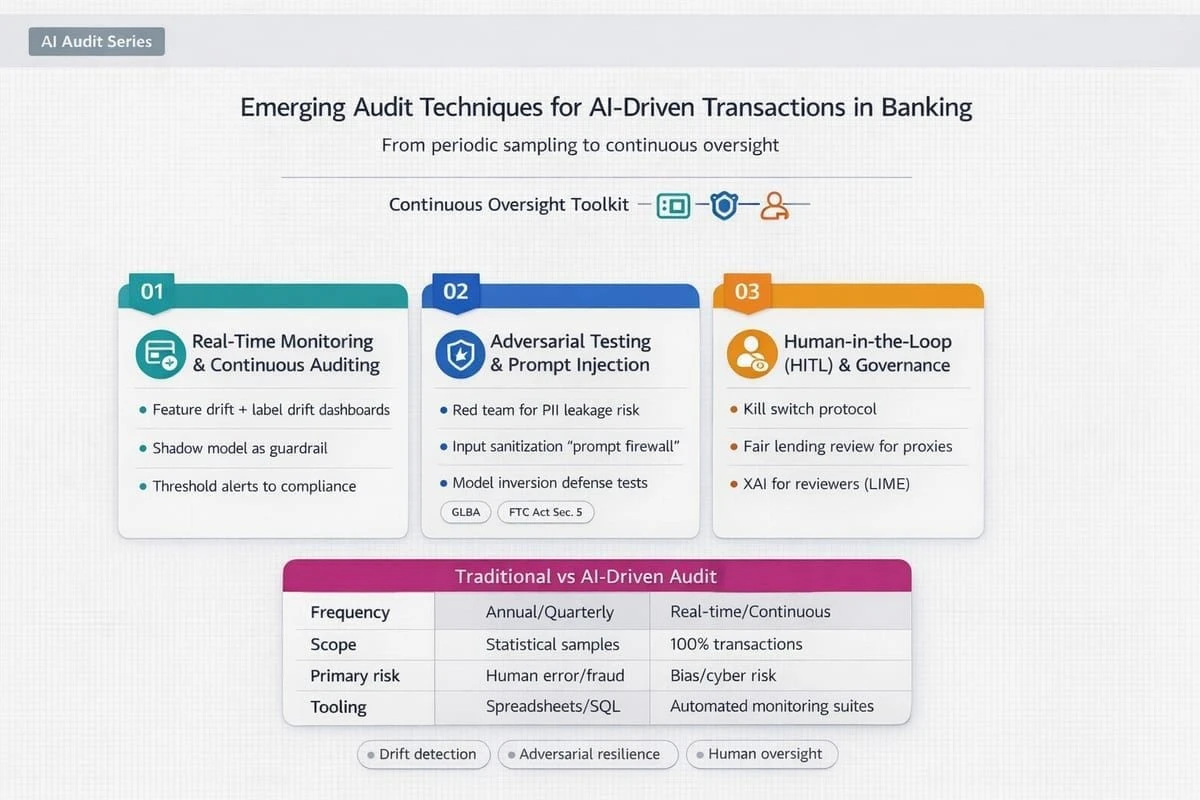

Continuous auditing for AI-driven transactions now depends on real-time monitoring, explainability artifacts, adversarial testing, and human checkpoints that make each automated decision reconstructable and defensible.

Continuous assurance becomes the default audit posture

AI-driven transaction decisioning is compressing the window between detection, decision, and settlement. As a result, internal audit programs are shifting away from point-in-time sampling toward continuous assurance models that can surface exceptions while corrective action is still possible. The executive issue is control latency: when monitoring and escalation operate on weekly or monthly cycles, model-driven errors can scale into customer harm, fraud loss, or payments disruption before governance can respond.

This shift changes what “sufficient audit evidence” looks like. Instead of relying primarily on policy documents and retrospective test results, auditors increasingly need machine-verifiable evidence streams that show how controls operated for real decisions in production. That evidence has to be consumable across the lines of defense, consistent with model risk governance, and resilient to frequent model and data updates.

Real-time monitoring and agentic assurance

Real-time analytics make it feasible to monitor transaction populations rather than small samples, but the audit design must separate signal from noise. Effective programs define a narrow set of control-relevant indicators that align to risk appetite and that can be tied to accountable owners. In practice, this means prioritizing indicators such as policy exceptions, unusual concentration patterns, or control bypass events over generic anomaly scores that cannot be investigated within operational capacity.

Agentic AI is being positioned as a continuous compliance layer that flags exceptions before settlement, but audit must treat these agents as part of the control environment rather than as advisory tooling. The key questions are whether the agent’s authority boundaries are explicit, whether the actions it can take are logged and replayable, and whether its outputs are governed as decisions or as decision support. Without those disciplines, agentic workflows can quietly create a parallel decisioning channel that is difficult to supervise.
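
To make the authority-boundary question concrete, the following is a minimal sketch of how an agent's permitted actions could be declared and every attempt logged for later replay. The `AgentPolicy` and `ActionLog` classes, the action names, and the log fields are all hypothetical illustrations, not a reference to any particular agent framework.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass
class AgentPolicy:
    """Hypothetical authority boundary for a single agent."""
    agent_id: str
    # Actions the agent may execute on its own (governed as decisions).
    allowed_actions: frozenset
    # Actions the agent may only recommend (governed as decision support).
    advisory_only: frozenset = frozenset()


@dataclass
class ActionLog:
    """Append-only record of every attempted action, including blocked ones."""
    entries: list = field(default_factory=list)

    def record(self, agent_id, action, payload, outcome):
        self.entries.append({
            "ts": datetime.now(timezone.utc).isoformat(),
            "agent_id": agent_id,
            "action": action,
            "payload": payload,
            "outcome": outcome,
        })


def authorize(policy, action, payload, log):
    """Classify an attempted action against the policy and log the attempt."""
    if action in policy.allowed_actions:
        outcome = "executed"
    elif action in policy.advisory_only:
        outcome = "recommended"
    else:
        outcome = "blocked"
    log.record(policy.agent_id, action, payload, outcome)
    return outcome
```

Logging blocked attempts alongside executed ones matters for audit: a pattern of out-of-scope attempts is itself evidence about whether a parallel decisioning channel is forming.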

For audit leaders, the practical implication is evidence engineering. Monitoring outputs, triage decisions, and remediations must be bound together into a single trail that explains not only what was detected, but what was done and why. If the control story cannot be reconstructed end to end, continuous monitoring becomes operational activity rather than assurance.
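
One possible way to bind detection, triage, and remediation into a single trail is a hash chain, where each record includes the hash of the record before it. The sketch below is an illustration under assumed field names (`stage`, `detail`), not a description of any specific bank's evidence store; a production design would also need signing, secure storage, and retention controls.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first event in a trail


def _digest(body):
    """Deterministic SHA-256 over the event body."""
    return hashlib.sha256(
        json.dumps(body, sort_keys=True).encode("utf-8")
    ).hexdigest()


def append_event(trail, stage, detail):
    """Append an event whose hash covers its content and the previous hash."""
    prev_hash = trail[-1]["hash"] if trail else GENESIS
    body = {"stage": stage, "detail": detail, "prev_hash": prev_hash}
    trail.append({**body, "hash": _digest(body)})
    return trail


def verify_trail(trail):
    """Return True only if no event was altered, removed, or reordered."""
    prev_hash = GENESIS
    for event in trail:
        body = {key: event[key] for key in ("stage", "detail", "prev_hash")}
        if event["prev_hash"] != prev_hash or event["hash"] != _digest(body):
            return False
        prev_hash = event["hash"]
    return True


trail = []
append_event(trail, "detection", {"txn": "T1", "indicator": "policy_exception"})
append_event(trail, "triage", {"analyst": "A7", "decision": "escalate"})
append_event(trail, "remediation", {"action": "payment_held", "reason": "limit breach"})
```

Because each hash depends on the previous one, editing the triage record after the fact would invalidate every later record, which is what lets an auditor treat the trail as one reconstructable story rather than three separate logs.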

Explainability as an audit artifact

Explainable AI is moving from a compliance talking point to an auditable artifact requirement. When an automated system denies, flags, or delays a transaction, banks must be able to provide a rationale that is stable enough to support customer disputes and supervisory review. Audit should evaluate whether explanations are generated consistently at decision time, retained with the relevant transaction record, and understandable to investigators who need to validate whether the outcome was appropriate under policy.

Explainability also functions as a quality control for model changes. If explanations materially shift after a model refresh without a corresponding policy change, that is an early indicator of hidden feature drift, data leakage, or unintended proxying. Treating explainability outputs as part of the change-control evidence package helps management detect those issues before they surface as external complaints.
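
As an illustration of how an explanation shift might be quantified across a model refresh, the sketch below compares each feature's share of total absolute attribution before and after the change, using total variation distance. It assumes explanations are available as per-decision dictionaries of feature attributions; the feature names and any alert threshold applied to the result are hypothetical.

```python
def attribution_shares(attributions):
    """Share of total absolute attribution carried by each feature."""
    totals = {}
    for row in attributions:
        for feature, value in row.items():
            totals[feature] = totals.get(feature, 0.0) + abs(value)
    grand_total = sum(totals.values())
    if grand_total == 0:
        return {feature: 0.0 for feature in totals}
    return {feature: total / grand_total for feature, total in totals.items()}


def explanation_shift(before, after):
    """Total variation distance between two attribution profiles (0 to 1)."""
    p = attribution_shares(before)
    q = attribution_shares(after)
    features = set(p) | set(q)
    return 0.5 * sum(abs(p.get(f, 0.0) - q.get(f, 0.0)) for f in features)


# Hypothetical attributions sampled before and after a model refresh.
before = [{"amount": 0.6, "country": 0.4}]
after = [{"amount": 0.2, "country": 0.4, "device": 0.4}]
shift = explanation_shift(before, after)
```

A value near 0 means the model is explaining decisions with roughly the same features as before; a large value with no corresponding policy change is the kind of signal that could be attached to the change-control evidence package for review.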

Adversarial resilience becomes an audit domain

As models are embedded into transaction workflows, threat actors adapt. Audit programs therefore need explicit procedures for vulnerabilities that are unique to AI-enabled controls, including prompt injection, indirect prompt manipulation, and data poisoning. The intent is not to turn audit into a security function, but to ensure that the control environment anticipates realistic abuse paths that could cause decisioning errors or confidential data exposure.

Red team simulations are increasingly used to test whether malicious inputs can bypass filters, override guardrails, or induce unsafe actions by agentic components. Auditors should focus on whether testing is repeatable, whether it covers both the model layer and the surrounding orchestration, and whether remediation is tracked with the same rigor applied to traditional control findings. Where automated tools generate evolving adversarial prompts, banks also need governance for who can run them, where results are stored, and how quickly fixes are deployed.

Validation layers matter because they define the bank’s practical ability to detect tampering. Auditors should examine whether the bank scans for anomalous payloads, unexpected tool calls, or suspicious feature patterns that indicate data poisoning attempts. Equally important is operational readiness: detection that cannot be acted on within the transaction lifecycle does not materially reduce risk.

Human checkpoints and dispute rights reshape operating models

Regulatory and supervisory expectations in 2026 are converging on a simple operational principle: high-stakes automated decisions require a human checkpoint. In practice, this is often implemented through confidence scoring and routing, where low-confidence outcomes are escalated to trained investigators. Audit should test whether confidence thresholds are justified, whether escalation queues are adequately staffed, and whether human reviewers receive sufficient context to make consistent decisions rather than rubber-stamping automation.
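
A minimal sketch of confidence-based routing follows. It assumes a stricter threshold for high-stakes decisions than for standard ones; the threshold values, the `Decision` fields, and the queue names are illustrative assumptions, not figures drawn from any regulation.

```python
from dataclasses import dataclass

# Illustrative thresholds only; a real program would justify them with data.
THRESHOLDS = {"high_stakes": 0.98, "standard": 0.85}


@dataclass(frozen=True)
class Decision:
    transaction_id: str
    outcome: str        # e.g. "approve", "deny", "flag"
    confidence: float   # model confidence between 0 and 1
    high_stakes: bool


def route(decision, thresholds=THRESHOLDS):
    """Send low-confidence outcomes to human review and record why."""
    if not 0.0 <= decision.confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    tier = "high_stakes" if decision.high_stakes else "standard"
    threshold = thresholds[tier]
    queue = "human_review" if decision.confidence < threshold else "auto"
    # Returning the tier and threshold lets the routing rationale be
    # retained with the transaction record.
    return {
        "transaction_id": decision.transaction_id,
        "queue": queue,
        "tier": tier,
        "threshold": threshold,
    }
```

Returning the threshold that was applied, rather than only the queue, gives auditors a direct way to test whether routing followed the approved thresholds at the time of each decision.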

Customer dispute handling is becoming an explicit control requirement. When customers challenge an AI-driven outcome, the bank must be able to perform a formal human review, supported by retained decision evidence and clear policy interpretation. Audit should evaluate whether dispute processes are integrated with model monitoring and issue management so that repeated disputes trigger model and control review rather than remaining isolated casework.
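
The link between dispute handling and model review can be sketched as a simple aggregation: disputes are grouped by model version and reason code, and any group that reaches a set count is raised for review. The record fields and the `min_disputes` value below are hypothetical; the appropriate trigger would depend on volumes and risk appetite.

```python
from collections import Counter


def groups_needing_review(disputes, min_disputes=3):
    """Return (model_version, reason_code) pairs with repeated disputes."""
    counts = Counter(
        (d["model_version"], d["reason_code"]) for d in disputes
    )
    return sorted(key for key, n in counts.items() if n >= min_disputes)


# Hypothetical dispute records for illustration.
disputes = [
    {"model_version": "v7", "reason_code": "false_decline"},
    {"model_version": "v7", "reason_code": "false_decline"},
    {"model_version": "v7", "reason_code": "false_decline"},
    {"model_version": "v7", "reason_code": "delay"},
    {"model_version": "v6", "reason_code": "false_decline"},
]
flagged = groups_needing_review(disputes)
```

In this example only the `v7` false-decline group reaches the trigger, so three individual cases become one issue for model and control review instead of remaining isolated casework.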

Governance structures are responding by treating AI agents as digital team members with defined roles, delegated authority, and performance accountability. That framing is useful for audit because it forces clarity on job design, segregation of duties, and supervisory oversight. If an agent can initiate an action, the operating model must specify who is responsible for its behavior and how that responsibility is evidenced.

2026 regulatory signals that influence audit planning

The U.S. Treasury’s Financial Services AI Risk Management Framework, released in February 2026, reinforces expectations that banks manage AI through structured risk identification, governance, and operational resilience practices rather than through ad hoc model reviews. For audit, the immediate impact is scoping discipline: programs should map continuous monitoring, explainability, and human checkpoints to defined risk outcomes and show how governance detects, escalates, and remediates issues within risk appetite.

Supervisory focus is also concentrating on the largest institutions. The OCC’s proposal to raise the asset threshold for applying its Heightened Standards from $50 billion to $700 billion signals a narrower target set, but it also raises the bar for what “credible governance” looks like among the banks that remain in scope. For those institutions, audit planning needs to assume deeper scrutiny of end-to-end decisioning chains, including third-party dependencies, model change velocity, and evidence quality under stress conditions.

Building executive confidence in continuous AI assurance decisions

Continuous assurance requires more than new monitoring tools. Executives need to know whether the organization can operate, evidence, and govern real-time audit techniques across data, models, and human review capacity. A digital maturity assessment supports that judgment by benchmarking capability in areas such as real-time control telemetry, explainability retention, adversarial testing discipline, and escalation operating model readiness, and by clarifying where trade-offs are being made between speed of automation and defensibility.

In banks where control evidence is fragmented across fraud, payments, and technology teams, maturity measurement is particularly valuable because it exposes where accountability is unclear and where evidence cannot be stitched into a coherent supervisory narrative. Used this way, the DUNNIXER Digital Maturity Assessment helps leadership evaluate sequencing, identify which constraints most threaten auditability, and increase decision confidence when expanding AI-driven transaction decisioning into higher-stakes use cases.

Reviewed by

Ahmed is the Founder & CEO of DUNNIXER and a former IBM Executive Architect with 26+ years in IT strategy and solution architecture. He has led architecture teams across the Middle East & Africa and globally, and also served as a Strategy Director (contract) at EY-Parthenon. He is an inventor with multiple US patents and an IBM-published author. He works with CIOs, CDOs, CTOs, and Heads of Digital to replace conflicting transformation narratives with an evidence-based digital maturity baseline, peer benchmark, and prioritized 12–18 month roadmap. This work is delivered consulting-led and platform-powered for repeatability and speed to decision, and includes an executive/board-ready readout. He writes about digital maturity, benchmarking, application portfolio rationalization, and how leaders prioritize digital and AI investments.