At a Glance

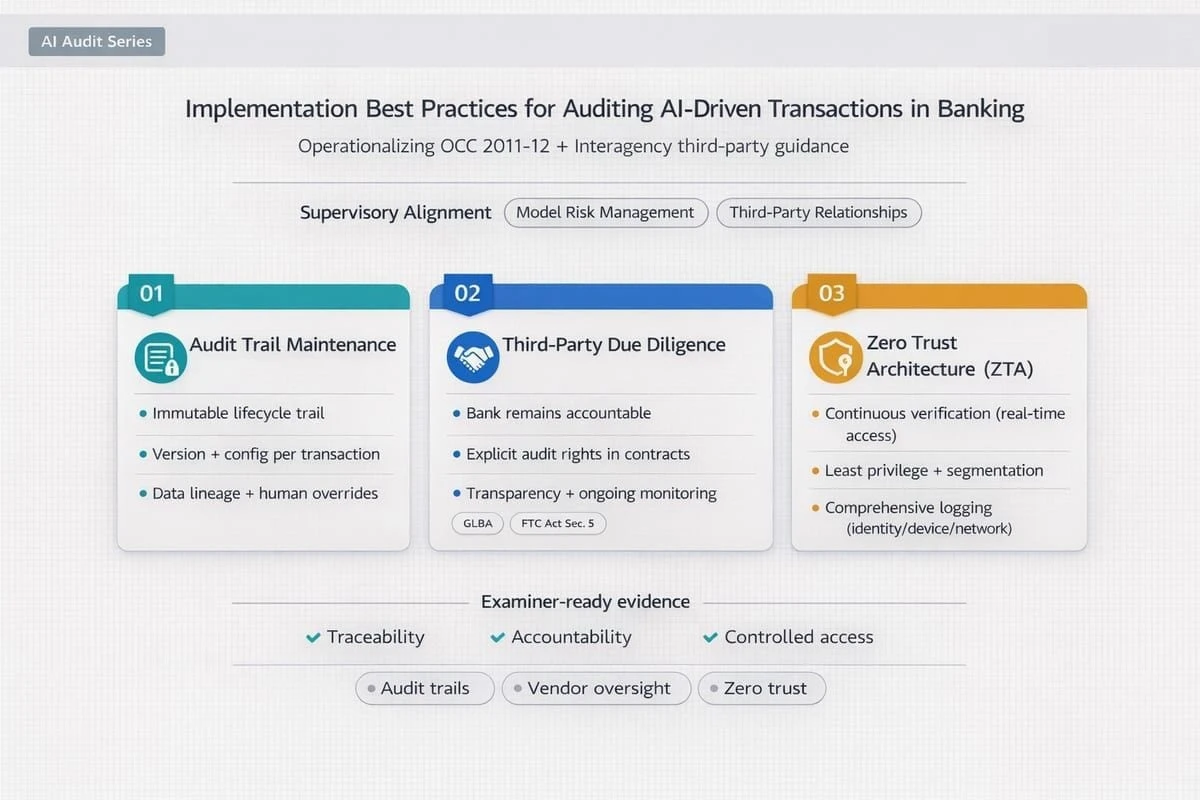

Banks auditing AI-driven transactions should treat auditability as an implementation design problem, not a documentation cleanup exercise. The core requirements are transaction-level traceability, model and prompt version control, human-override evidence, third-party evidence rights, and identity-centric controls for non-human actors.

Why implementation discipline determines whether AI decisions are auditable

When AI is embedded in transaction workflows such as fraud intervention, sanctions filtering, AML triage, or credit routing, the audit question is not whether the bank has a policy. It is whether the bank can reconstruct a specific decision under time pressure: what data was used, which model or orchestration version acted, what controls fired, whether a human intervened, and what evidence is available across internal and third-party components.

That makes auditability an implementation property. If logging is fragmented, model changes are weakly governed, or vendor evidence is hard to obtain, the bank may still have a nominal governance framework but remain unable to defend individual transaction outcomes coherently across the three lines of defense.

Core implementation best practices

Build transaction-level audit trails that support replay

Audit trails should be designed to reconstruct the full path from input to outcome. For each decision, the bank should be able to identify the triggering event, relevant inputs, model or orchestration version, thresholds or prompts used, output produced, control decisions applied, and any human override or escalation. Logging that is only system-level or summary-level is rarely enough for audit, dispute handling, or examiner review.

- Bind every decision to a stable transaction or case identifier.

- Retain model, prompt, threshold, feature, and policy versions used at decision time.

- Record human overrides, approvals, and exception handling with rationale.

- Store logs in tamper-evident form and make them queryable across internal and vendor-operated components.
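
The practices above can be sketched as a decision record whose entries are hash-chained, one common way to make a log tamper-evident. This is a minimal illustration, not a prescribed design: the `DecisionRecord` fields, `append_record`, and `verify_chain` are hypothetical names, and an in-memory list stands in for a durable store.

```python
import hashlib
import json
from dataclasses import dataclass, asdict
from typing import Optional

GENESIS_HASH = "0" * 64  # placeholder hash that precedes the first record


@dataclass(frozen=True)
class DecisionRecord:
    transaction_id: str             # stable transaction or case identifier
    triggering_event: str
    inputs: dict                    # must be JSON-serializable in this sketch
    artifact_versions: dict         # model, prompt, threshold, feature, policy versions
    output: str
    controls_applied: list
    human_override: Optional[dict]  # who, what, and rationale; None if fully automated
    prev_hash: str                  # hash of the preceding record in the log


def record_hash(record: DecisionRecord) -> str:
    """Hash a canonical JSON form so any later edit changes the digest."""
    canonical = json.dumps(asdict(record), sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def append_record(log: list, **fields) -> DecisionRecord:
    """Append a record that is linked to the hash of the current tail of the log."""
    prev = record_hash(log[-1]) if log else GENESIS_HASH
    record = DecisionRecord(prev_hash=prev, **fields)
    log.append(record)
    return record


def verify_chain(log: list) -> bool:
    """Return True only if every record still points at its predecessor's hash."""
    expected = GENESIS_HASH
    for record in log:
        if record.prev_hash != expected:
            return False
        expected = record_hash(record)
    return True
```

A chain like this detects edits to any record that has a successor. Detecting a change to the most recent record would additionally require anchoring the latest hash somewhere outside the log, which this sketch does not do.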

Treat model and prompt changes as governed production changes

SR 11-7 and related banking guidance do not address AI transaction workflows by name, but the principle carries over directly: model risk management depends on robust development, implementation, validation, governance, and controls. For AI-driven transactions, that means model updates, prompt revisions, threshold changes, retrieval logic changes, and guardrail updates need the same discipline as any other production control change. A transaction can only be audited well if the bank can prove which approved artifact was in force at the time.
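
One way to make "which approved artifact was in force at the time" answerable is an append-only release registry that can be queried by decision timestamp. The sketch below is illustrative only: `ApprovedRelease`, `ReleaseRegistry`, and the artifact and approver names are assumptions, and timezone-aware UTC timestamps are assumed throughout.

```python
from bisect import bisect_right
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class ApprovedRelease:
    artifact: str            # e.g. a model, prompt, threshold set, or guardrail
    version: str
    approved_by: str
    effective_from: datetime


class ReleaseRegistry:
    """Append-only history of approved releases, ordered by effective time."""

    def __init__(self) -> None:
        self._releases = {}  # artifact name -> list of ApprovedRelease

    def approve(self, release: ApprovedRelease) -> None:
        history = self._releases.setdefault(release.artifact, [])
        if history and release.effective_from <= history[-1].effective_from:
            raise ValueError("releases must be recorded in increasing effective order")
        history.append(release)

    def in_force(self, artifact: str, at: datetime) -> Optional[ApprovedRelease]:
        """Return the latest release effective at or before `at`, if any."""
        history = self._releases.get(artifact, [])
        times = [r.effective_from for r in history]
        idx = bisect_right(times, at)
        return history[idx - 1] if idx else None


# Illustrative usage with hypothetical artifact and approver names.
registry = ReleaseRegistry()
registry.approve(ApprovedRelease("triage-prompt", "v1", "change-board",
                                 datetime(2024, 1, 1, tzinfo=timezone.utc)))
registry.approve(ApprovedRelease("triage-prompt", "v2", "change-board",
                                 datetime(2024, 2, 1, tzinfo=timezone.utc)))
decision_time = datetime(2024, 1, 15, tzinfo=timezone.utc)
print(registry.in_force("triage-prompt", decision_time).version)  # prints v1
```

Rejecting out-of-order approvals keeps the history monotonic, so a later backdated entry cannot silently change the answer for a past decision.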

Require explanations that are usable in operations, not just available in theory

Explainability is only valuable when investigators, second-line reviewers, and auditors can use it without generating conflicting narratives. Explanations should therefore be retained with the decision record, aligned with policy language, and tested for stability and usefulness in real case handling. An explanation method that exists only in a model toolkit but is not preserved in the operating evidence chain does not materially improve auditability.
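
As a rough sketch of retaining an explanation with the decision record, the example below freezes the top contributing reason codes together with the policy wording in force at decision time. The reason codes, the catalogue text, and the `freeze_explanation` helper are hypothetical; real codes and wording would come from the bank's own policy, and the contribution scores from whatever attribution method the bank has validated.

```python
from dataclasses import dataclass

# Hypothetical reason-code catalogue, versioned so the wording can be reproduced later.
POLICY_REASONS_V3 = {
    "VELOCITY": "Transaction frequency exceeded the approved velocity threshold.",
    "GEO_MISMATCH": "Transaction location is inconsistent with the customer profile.",
    "NEW_PAYEE": "Payment was directed to a payee with no prior history.",
}


@dataclass(frozen=True)
class StoredExplanation:
    transaction_id: str
    catalogue_version: str
    reasons: tuple  # (code, policy_text, contribution) entries fixed at decision time


def freeze_explanation(transaction_id, contributions, catalogue, catalogue_version, top_n=3):
    """Keep the strongest reason codes alongside the policy wording then in force."""
    unknown = sorted(code for code in contributions if code not in catalogue)
    if unknown:
        # Refuse to store an explanation that cannot be expressed in policy language.
        raise KeyError(f"reason codes missing from catalogue: {unknown}")
    ranked = sorted(contributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
    reasons = tuple((code, catalogue[code], weight) for code, weight in ranked[:top_n])
    return StoredExplanation(transaction_id, catalogue_version, reasons)
```

Storing the policy text itself, not just the code, means a reviewer reading the record months later sees the same wording the investigator saw, even if the catalogue has since changed.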

Centralize evidence capture where possible

Evidence quality improves when inputs, outputs, metadata, and control decisions are captured through a common instrumentation layer or gateway rather than through inconsistent application-specific logging. Centralized capture is particularly useful where multiple models, vendors, or orchestration components contribute to a single transaction outcome.
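
A common instrumentation layer can be as simple as a wrapper that every decision component passes through. The sketch below assumes a hypothetical `capture_evidence` decorator and an in-memory `EVIDENCE_SINK` standing in for a durable, append-only store; the `screen` function and its rule are placeholders for a real model or vendor call.

```python
import functools
import time
import uuid

EVIDENCE_SINK = []  # stand-in for a durable, append-only evidence store


def capture_evidence(component: str, version: str):
    """Wrap a decision function so every call emits one uniform evidence event."""
    def decorator(func):
        @functools.wraps(func)
        def wrapper(transaction_id: str, payload: dict):
            event = {
                "event_id": str(uuid.uuid4()),
                "transaction_id": transaction_id,
                "component": component,
                "version": version,
                "input": dict(payload),  # shallow copy of what the component saw
                "started_at": time.time(),
            }
            try:
                event["output"] = func(transaction_id, payload)
                event["status"] = "ok"
                return event["output"]
            except Exception as exc:
                event["status"] = "error"
                event["error"] = repr(exc)
                raise
            finally:
                # Evidence is recorded whether the call succeeded or failed.
                event["finished_at"] = time.time()
                EVIDENCE_SINK.append(event)
        return wrapper
    return decorator


@capture_evidence(component="sanctions-filter", version="2.4.1")
def screen(transaction_id: str, payload: dict) -> str:
    # Placeholder rule standing in for a real model or vendor call.
    return "review" if payload.get("country") == "XX" else "clear"
```

Because every component emits the same event shape keyed by transaction identifier, the events from several models or vendors can later be joined into a single timeline for one transaction.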

Make third-party evidence rights practical, not theoretical

Where external platforms, fintechs, cloud providers, or model providers support transaction decisioning, third-party evidence becomes part of the bank's audit perimeter. The key issue is not whether the vendor claims strong governance. It is whether the bank can obtain the logs, change records, incident evidence, and control documentation needed to investigate outcomes and satisfy supervisory review in a usable timeframe.

- Contract for audit rights, evidence access, and notification of material changes.

- Define which party owns which parts of the decision evidence chain.

- Test retrieval time and usability of vendor evidence before relying on it in production.

- Scale oversight to criticality, decision impact, and transaction volume exposure.
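
Testing retrieval time and usability can be automated as a periodic drill. The sketch below times a vendor evidence request and checks that the response contains the fields an investigation would need. The `fetch` callable, the `REQUIRED_FIELDS` set, and the time limit are all assumptions for illustration; the real field list and window would come from the contract and the bank's investigation procedures.

```python
import time

# Assumed minimum fields for a usable vendor decision record.
REQUIRED_FIELDS = {"transaction_id", "model_version", "decision", "timestamp"}


def check_vendor_evidence(fetch, transaction_id, max_seconds):
    """Time one evidence retrieval and report whether the result is usable.

    `fetch` is any callable that takes a transaction id and returns a dict,
    for example a thin wrapper around a vendor's evidence endpoint.
    """
    started = time.monotonic()
    evidence = fetch(transaction_id)
    elapsed = time.monotonic() - started

    missing = sorted(REQUIRED_FIELDS - set(evidence))
    within_window = elapsed <= max_seconds
    return {
        "elapsed_seconds": elapsed,
        "within_window": within_window,
        "missing_fields": missing,
        "usable": within_window and not missing,
    }
```

Running a check like this against sampled production transactions, before an incident, shows whether contractual evidence rights translate into evidence that actually arrives complete and on time.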

Apply zero trust principles to AI agents and machine identities

If agentic or semi-autonomous AI components can call tools, retrieve data, or take transaction-related actions, they should be governed as first-class identities. NIST zero trust guidance is relevant here because implicit trust weakens accountability: when access is granted without per-request verification, actions are harder to attribute to a specific actor. Each non-human actor should have a unique identity, bounded permissions, clear ownership, revocation capability, and telemetry that supports replay and investigation.

- Assign unique machine identities to each agent or service.

- Enforce least privilege for data, APIs, and actions.

- Use contextual authorization and log every sensitive action.

- Monitor for anomalous access patterns, drift, and unexpected tool usage.
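
The controls above can be sketched as a deny-by-default authorization check that logs every request. This is a simplified illustration: `MachineIdentity`, `authorize`, the per-action amount limits, and the in-memory `ACCESS_LOG` are hypothetical, and a production system would rely on the bank's identity provider and policy engine.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class MachineIdentity:
    identity_id: str      # unique per agent or service
    owner: str            # accountable team or person
    action_limits: dict   # action name -> maximum transaction amount permitted
    revoked: bool = False


ACCESS_LOG = []  # stand-in for centralized, protected telemetry


def authorize(identity: MachineIdentity, action: str, amount: float) -> bool:
    """Deny by default; allow only granted actions within the identity's limit."""
    if identity.revoked:
        allowed, reason = False, "identity revoked"
    elif action not in identity.action_limits:
        allowed, reason = False, "action not granted"
    elif amount > identity.action_limits[action]:
        allowed, reason = False, "amount exceeds limit for this identity"
    else:
        allowed, reason = True, "within granted scope"

    # Every request is logged, including denials, to support investigation.
    ACCESS_LOG.append({
        "at": datetime.now(timezone.utc).isoformat(),
        "identity_id": identity.identity_id,
        "owner": identity.owner,
        "action": action,
        "amount": amount,
        "allowed": allowed,
        "reason": reason,
    })
    return allowed
```

Logging denials as well as approvals matters for the monitoring bullet: a burst of denied requests from one agent is often the earliest sign of drift or unexpected tool usage.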

How banking guidance should shape implementation choices

Existing supervisory expectations already provide most of the control logic banks need. SR 11-7 establishes the importance of model governance, validation, and controls. The interagency third-party risk management guidance makes clear that vendor reliance does not reduce the bank's accountability. FFIEC information security guidance reinforces the need for logging, centralized monitoring, and protected audit evidence. NIST AI RMF and NIST zero trust guidance help translate those expectations into operating design choices for modern AI systems.

The implication is straightforward: banks should not wait for a single AI-specific rulebook before improving auditability. They should design AI transaction controls so they can withstand established examination logic around governance, traceability, information security, third-party oversight, and operational resilience.

Readiness questions audit and risk leaders should force early

- Can we replay a specific AI-influenced transaction end to end using preserved evidence rather than narrative reconstruction?

- Can we identify exactly which model, prompt, rule, configuration, and approval state produced the outcome?

- Can we distinguish automated decisions from human overrides and explain both coherently?

- Can we obtain the necessary evidence from third parties inside investigation and reporting windows?

- Are non-human identities governed with clear ownership, bounded access, and actionable telemetry?
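
The first two questions can be turned into an automated check: re-run the pinned artifact on the preserved inputs and compare the result with the stored output. The sketch below assumes deterministic decision logic and a hypothetical record layout and `replay_decision` helper; generative or vendor-hosted components may not reproduce outputs exactly, in which case the comparison rule would need to be looser.

```python
def replay_decision(record: dict, decision_functions: dict) -> dict:
    """Re-run the pinned version on stored inputs and compare with the stored output.

    `decision_functions` maps (model name, version) to a callable that takes the
    stored inputs and returns a decision.
    """
    key = (record["model"], record["model_version"])
    if key not in decision_functions:
        # The evidence names an artifact the bank can no longer execute.
        return {"replayable": False, "reason": f"artifact {key} is not available"}

    replayed = decision_functions[key](record["inputs"])
    return {
        "replayable": True,
        "matches": replayed == record["output"],
        "replayed_output": replayed,
    }


# Illustrative usage with a hypothetical rule standing in for a real model.
archived = {("fraud-score", "1.0"): lambda x: "hold" if x["amount"] > 1000 else "release"}
stored = {
    "transaction_id": "T1",
    "model": "fraud-score",
    "model_version": "1.0",
    "inputs": {"amount": 2500},
    "output": "hold",
}
result = replay_decision(stored, archived)
```

A mismatch, or an artifact that can no longer be executed, is a concrete sign that the bank is relying on narrative reconstruction for that decision.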

Building confidence in implementation readiness

A maturity lens is useful because auditability usually fails at the seams: fragmented logs, weak third-party evidence access, unclear machine identity governance, or inconsistent model change control. Measuring those capabilities together gives leaders a clearer view of whether AI can be extended safely into higher-impact transaction workflows or whether the control environment would fragment under stress.

Used for that purpose, the DUNNIXER Digital Maturity Assessment helps leadership determine whether audit trail design, third-party evidence access, model governance, and identity controls are strong enough to support defensible AI transaction decisioning at scale.

References

- Federal Reserve SR 11-7: Guidance on Model Risk Management

- FDIC: Adoption of Supervisory Guidance on Model Risk Management

- OCC Bulletin 2023-17: Third-Party Relationships - Interagency Guidance on Risk Management

- FFIEC IT Examination Handbook: Information Security Booklet

- NIST AI RMF Playbook

- NIST AI RMF Playbook: Map Function

- NIST AI RMF: Generative AI Profile

- NIST SP 800-207: Zero Trust Architecture

- NIST: Planning a Zero Trust Architecture - A Starting Guide for Federal Administrators

- FDIC / Federal Reserve / OCC Request for Information on Financial Institutions' Use of AI

Reviewed by

Ahmed is the Founder & CEO of DUNNIXER and a former IBM Executive Architect with 26+ years in IT strategy and solution architecture. He has led architecture teams across the Middle East & Africa and globally, and also served as a Strategy Director (contract) at EY-Parthenon. He is an inventor with multiple US patents and an IBM-published author. He works with CIOs, CDOs, CTOs, and Heads of Digital to replace conflicting transformation narratives with an evidence-based digital maturity baseline, peer benchmark, and prioritized 12–18 month roadmap. The work is delivered consulting-led and platform-powered for repeatability and speed to decision, and includes an executive/board-ready readout. He writes about digital maturity, benchmarking, application portfolio rationalization, and how leaders prioritize digital and AI investments.